AI is only as powerful as the information it relies on. Build it on a trusted document foundation that removes ambiguity, reduces noise, and ensures reliable outcomes.

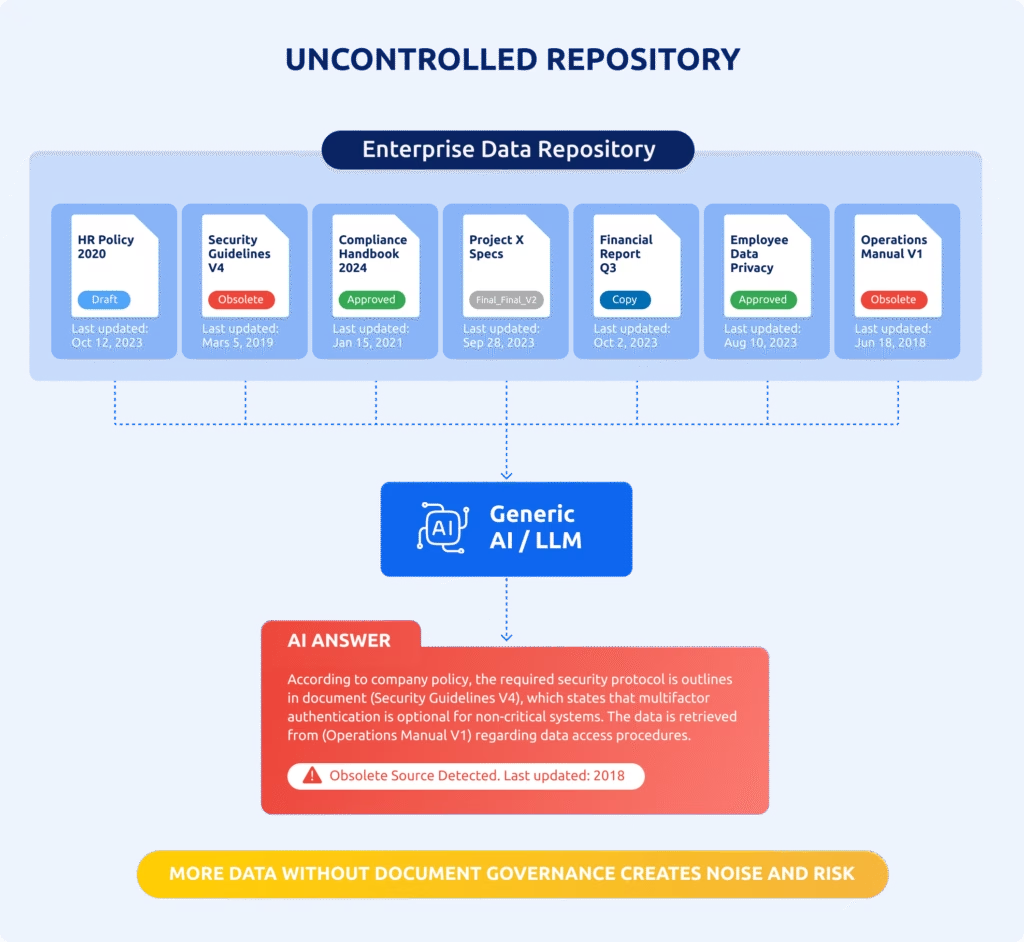

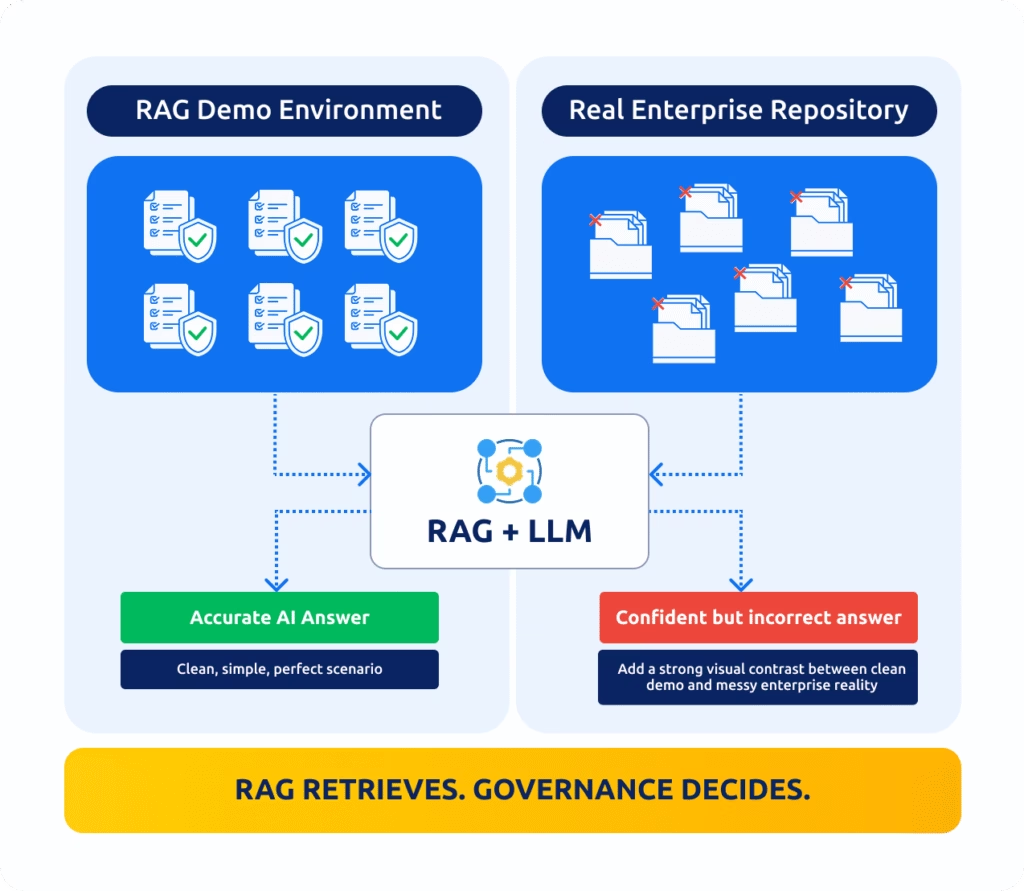

RAG demos look impressive because they rely on a small, manually curated set of clean, consistent, and approved documents.

Enterprise repositories are different. They contain:

- Duplicates and conflicting versions
- Drafts mixed with obsolete content
- Missing or inconsistent metadata
- Complex permission boundaries

RAG improves retrieval. It does not fix information quality.

Even a well-engineered RAG pipeline on top of unmanaged content will still generate confident but unreliable answers.

AODocs addresses the root cause: document governance.

“The more data you give your AI, the better it will perform”

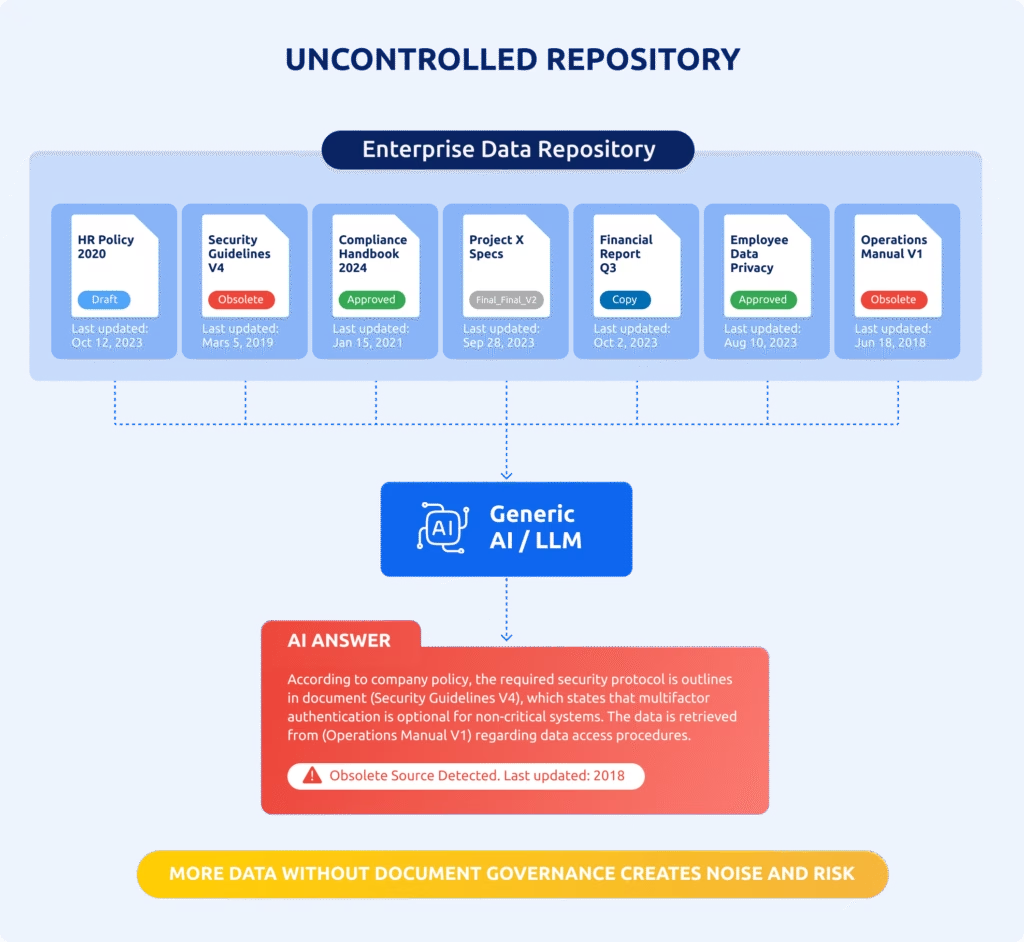

Giving your AI access to all enterprise data sources introduces noise, contradictions, obsolete versions, drafts, unapproved content, and duplicate or conflicting documents.

The AI assumes everything you give it is legitimate, even when your repository is full of outdated or invalid documents.

Example: give it a mix of draft policies and procedures, deprecated instructions, and the approved version, and it will treat them all as equally correct.

“AI is smart enough to figure out what’s correct”

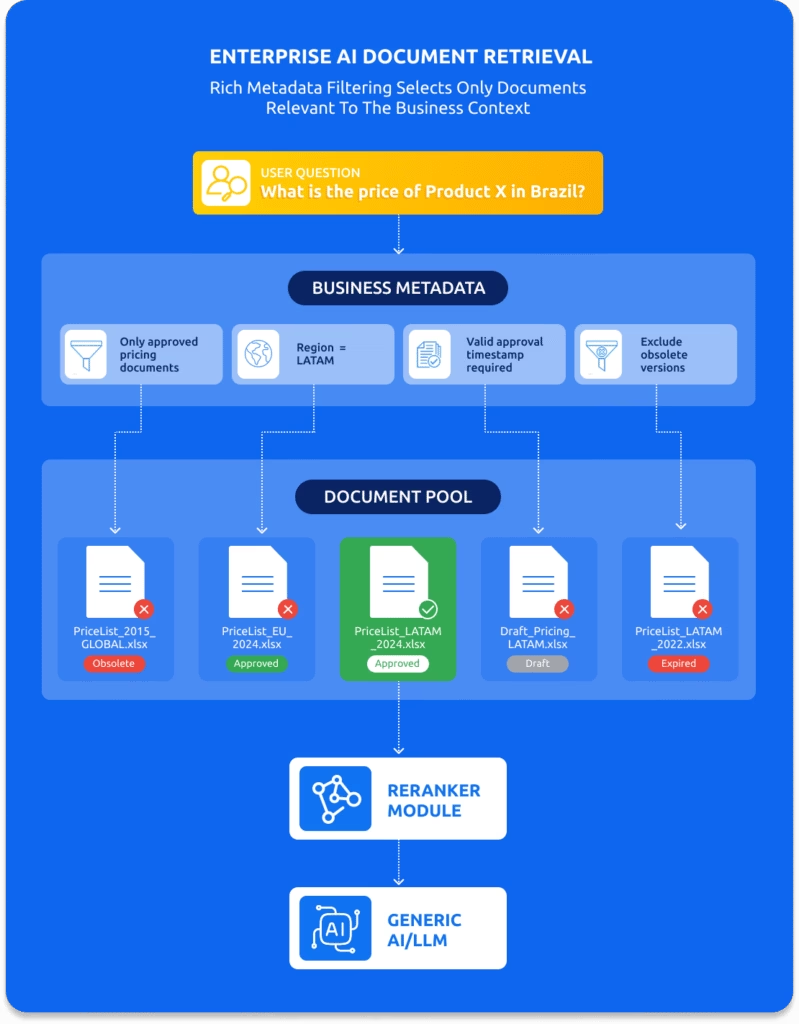

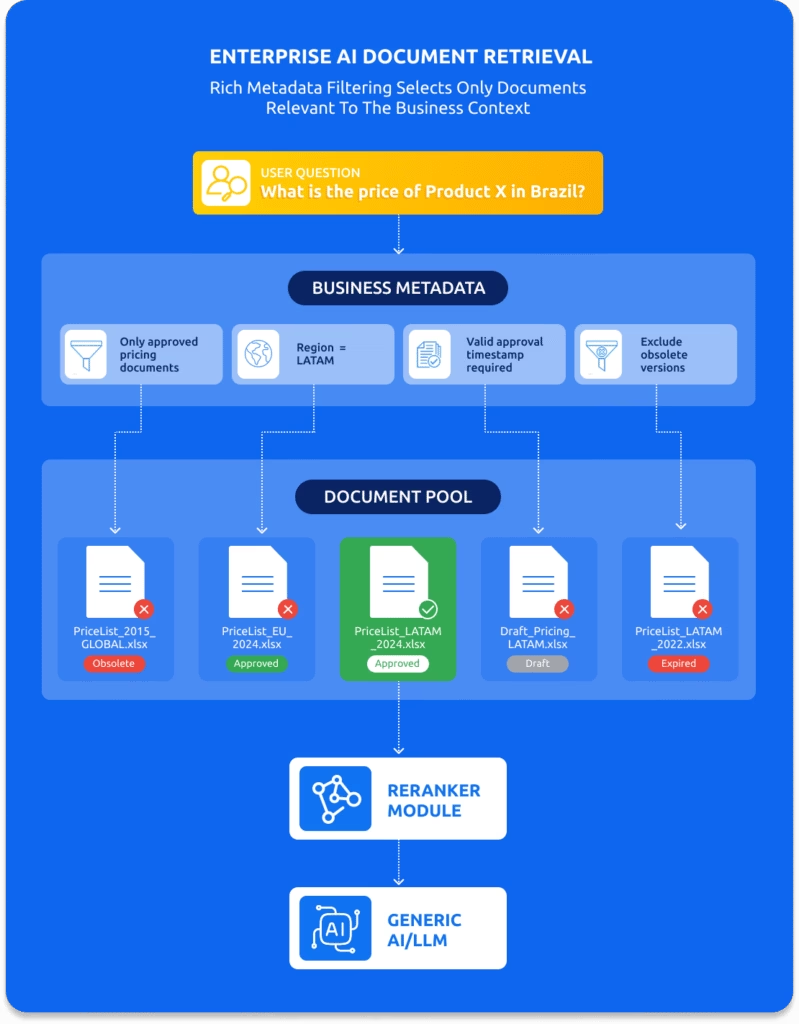

It isn’t. RAG pipelines rank documents by relevance to the question, not by validity. Your 2015 price list, for example, is perfectly relevant to the question “What is the price of product X?”, and yet the answer it contains is completely wrong in 2025.

Without document governance, the AI will confidently generate answers that sound right and look legitimate but are factually incorrect.
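The price-list example can be sketched in a few lines of Python. This is a toy illustration, not AODocs code: the `Doc` class, the `status` label, the word-overlap `relevance` function, and the prices are all invented for the example. It shows that a relevance score alone cannot tell the 2015 list from the 2025 one, while a governance signal can.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    text: str
    status: str  # hypothetical governance label: "approved" or "obsolete"


def relevance(question: str, doc: Doc) -> float:
    """Toy relevance: fraction of question words found in the document."""
    q_words = set(question.lower().replace("?", "").split())
    d_words = set(doc.text.lower().split())
    return len(q_words & d_words) / len(q_words)


# Invented documents and prices, for illustration only.
docs = [
    Doc("Price list 2015", "the price of product x is 100 USD", "obsolete"),
    Doc("Price list 2025", "the price of product x is 140 USD", "approved"),
]
question = "What is the price of product X?"

# Relevance-only view: both price lists look equally good.
scores = [relevance(question, d) for d in docs]

# Governed view: only approved documents are eligible to answer.
valid = [d for d in docs if d.status == "approved"]

print(scores)
print([d.title for d in valid])
```

Both documents receive the same relevance score, so nothing in the ranking favors the current price. Only the approval status separates them.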

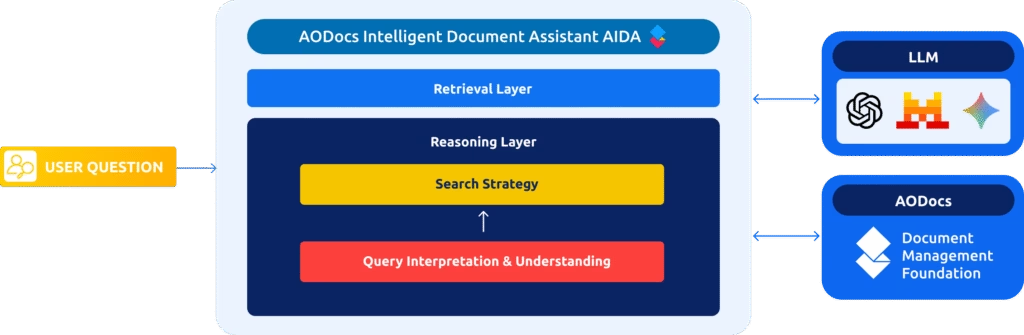

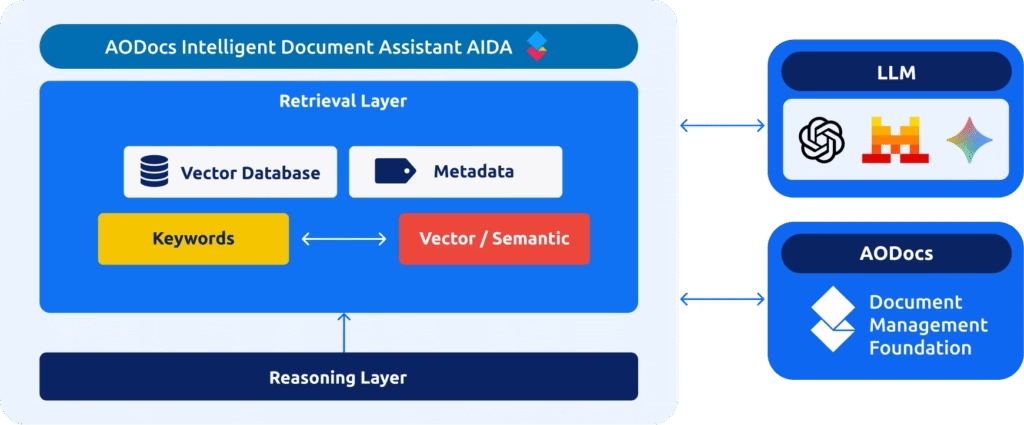

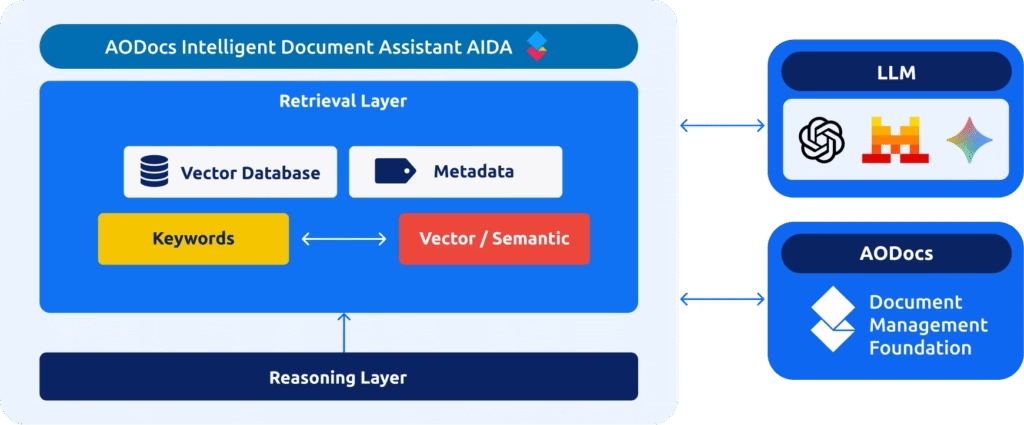

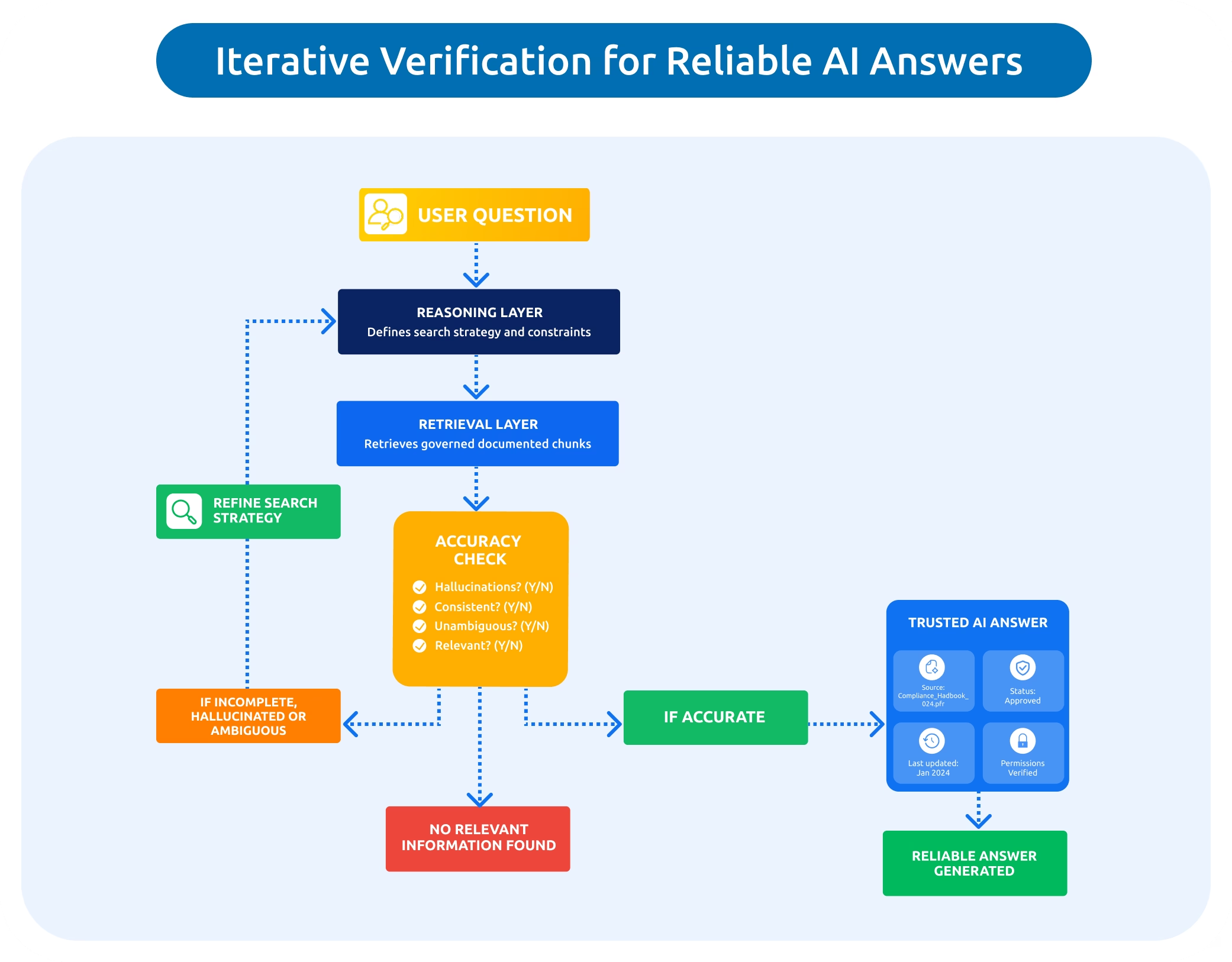

In the Reasoning Layer, AIDA (AODocs Intelligent Document Assistant):

- Interprets the user intent within the governed corpus
- Determines the optimal search method: vector, metadata, or hybrid

AIDA then evaluates which metadata, permissions, and version signals matter before initiating retrieval.
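How AIDA actually picks a search method is not described here. As a rough sketch of the idea only, the snippet below uses an assumed heuristic: queries made of `field:value` filters go to metadata search, free-text questions go to vector search, and a mix of both goes to hybrid search. The `KNOWN_FIELDS` set and the `choose_method` function are hypothetical.

```python
from enum import Enum


class SearchMethod(Enum):
    VECTOR = "vector"
    METADATA = "metadata"
    HYBRID = "hybrid"


# Hypothetical metadata fields; a real corpus would define its own.
KNOWN_FIELDS = {"status", "department", "year"}


def choose_method(query: str) -> SearchMethod:
    """Assumed heuristic: route on the presence of field:value filters."""
    tokens = query.split()
    filters = [
        t for t in tokens
        if ":" in t and t.split(":", 1)[0].lower() in KNOWN_FIELDS
    ]
    free_text = [t for t in tokens if t not in filters]
    if filters and free_text:
        return SearchMethod.HYBRID
    if filters:
        return SearchMethod.METADATA
    return SearchMethod.VECTOR


print(choose_method("how do I request parental leave"))
print(choose_method("status:approved department:hr"))
print(choose_method("travel policy year:2025"))
```

A production system would more likely infer these filters from the user's intent than from explicit syntax; the explicit form just keeps the routing decision easy to see.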

In the Retrieval Layer, AIDA executes the defined search strategy across the AODocs Document Foundation, applying metadata, version history, approval status, and user permissions.

Only valid and authoritative sources remain in the candidate set.

A Reranker module then refines the results, aligning each document chunk with the actual question to ensure relevance and reliability.
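The order of operations matters: governance filters run first, and relevance reranking only sees what survives. The sketch below illustrates that ordering under stated assumptions. The `Chunk` fields, `governed_candidates`, and the word-overlap `rerank` are hypothetical stand-ins, not the AODocs data model or its Reranker.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    version: int
    approved: bool
    readers: set  # user ids allowed to read the document (assumed model)
    text: str


def governed_candidates(chunks, user):
    """Keep approved, permitted chunks from the newest approved version."""
    allowed = [c for c in chunks if c.approved and user in c.readers]
    newest = {}
    for c in allowed:
        newest[c.doc_id] = max(newest.get(c.doc_id, 0), c.version)
    return [c for c in allowed if c.version == newest[c.doc_id]]


def rerank(question, chunks):
    """Toy reranker: order chunks by word overlap with the question."""
    q = set(question.lower().split())
    return sorted(
        chunks,
        key=lambda c: len(q & set(c.text.lower().split())),
        reverse=True,
    )


# Invented sample data, for illustration only.
chunks = [
    Chunk("travel-policy", 1, True, {"alice", "bob"},
          "travel expenses are reimbursed within 60 days"),
    Chunk("travel-policy", 2, True, {"alice", "bob"},
          "travel expenses are reimbursed within 30 days"),
    Chunk("travel-policy", 3, False, {"alice", "bob"},
          "draft: travel expenses are reimbursed within 15 days"),
    Chunk("salary-bands", 1, True, {"bob"}, "salary bands by level"),
]

alice_view = governed_candidates(chunks, "alice")
bob_ranked = rerank("how fast are travel expenses reimbursed",
                    governed_candidates(chunks, "bob"))
print([(c.doc_id, c.version) for c in alice_view])
print([(c.doc_id, c.version) for c in bob_ranked])
```

Here the unapproved draft (version 3) and the superseded version 1 never reach the reranker, and the salary document is invisible to a user without read access.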

Once relevant document chunks are retrieved, the Reasoning Layer evaluates whether the information is sufficient, consistent, unambiguous, and safe to use.

This iterative process, a structured chain of thought, continues until AIDA determines that:

- Enough high-quality information is available to answer reliably, or
- The requested information does not exist in the document base
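The two stopping conditions above can be pictured as a simple loop. This is a minimal sketch, not the AIDA implementation: `answer_with_iteration`, the `retrieve` and `is_sufficient` callbacks, and the `max_rounds` safety cap are all assumptions made for illustration.

```python
def answer_with_iteration(question, retrieve, is_sufficient, max_rounds=3):
    """Retrieve in rounds until the evidence suffices or nothing new is found."""
    evidence = []
    for round_no in range(max_rounds):
        new_chunks = [c for c in retrieve(question, round_no)
                      if c not in evidence]
        if not new_chunks:
            break  # nothing more to find in the document base
        evidence.extend(new_chunks)
        if is_sufficient(question, evidence):
            return {"status": "answerable", "evidence": evidence}
    return {"status": "not_found", "evidence": evidence}


# Stub callbacks with invented data, for illustration only.
def retrieve_two_rounds(question, round_no):
    return [["chunk A"], ["chunk B"], []][round_no]


def retrieve_nothing(question, round_no):
    return []


def two_chunks_suffice(question, evidence):
    return len(evidence) >= 2


found = answer_with_iteration("q", retrieve_two_rounds, two_chunks_suffice)
missing = answer_with_iteration("q", retrieve_nothing, two_chunks_suffice)
print(found["status"], found["evidence"])
print(missing["status"], missing["evidence"])
```

The explicit "not_found" outcome is the important design choice: the loop ends by reporting that the information is absent instead of answering from weak evidence.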

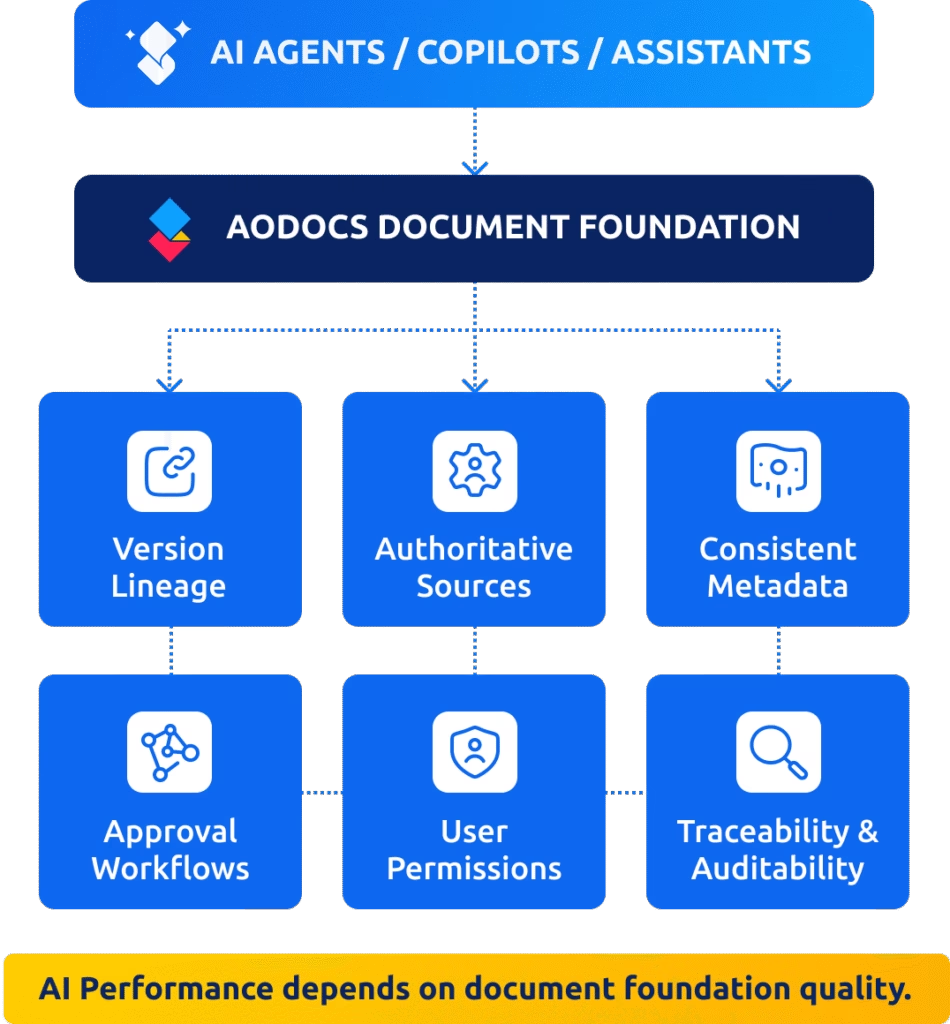

Reliable AI requires the same structured, governed environment that enables humans to work effectively:

- Clear version lineage
- Authoritative sources
- Consistent metadata
- Approval signals
- Permission boundaries
- Traceability and auditability

This is how AI moves from experimentation to production.

AI projects often fail for reasons that have nothing to do with the model itself. They fail because of wrong assumptions about information quality.